What Is Deep Learning? A Complete Guide (2026)

- Deep learning is a subset of machine learning that uses multi-layered neural networks to automatically learn patterns from data — no manual feature engineering required.

- The core building blocks are neurons, layers, activation functions, and backpropagation — understanding these four unlocks every major architecture.

- Modern deep learning frameworks (PyTorch, TensorFlow) make it approachable, but pitfalls like overfitting and vanishing gradients can silently kill your model.

- The global deep learning market is valued at ~$65B in 2026 and growing at a ~35% CAGR — making this one of the most commercially critical technologies to understand today.

Introduction

Every time you ask a voice assistant a question, unlock your phone with your face, or receive a surprisingly accurate product recommendation, deep learning is doing the heavy lifting behind the scenes. It is the engine powering the most impressive AI breakthroughs of our era — from large language models like GPT and Claude, to self-driving car perception stacks, to cancer-detection systems that rival specialist physicians.

Yet for many developers, the term “deep learning” still feels like a black box wrapped in math. What exactly is happening when a model “learns”? Why does depth matter? And how do you go from a simple array of numbers to a system that can describe the content of a photograph?

This guide answers all of that. You will walk away understanding what deep learning is, how a neural network is structured and trained, which architectures dominate in 2026, and what common mistakes trip up practitioners at every level. Whether you are a software engineer curious about AI, or a data professional looking to build your first model, this is your starting point.

You will get the most out of this article with: basic Python familiarity, a conceptual understanding of what a function and a variable are, and some exposure to what "machine learning" means at a high level. You do not need a maths degree — we will explain the key ideas intuitively and point you to deeper resources where they matter.

The AI Family Tree: Where Deep Learning Lives

Before diving in, it helps to know where deep learning sits in the broader landscape. Artificial intelligence is the overarching goal of making machines intelligent. Machine learning is a technique for achieving that goal — it trains algorithms on data instead of hand-coding explicit rules. Deep learning is a specialized subset of machine learning that achieves this through neural networks with many layers.

    Artificial Intelligence
    └── Machine Learning
        └── Deep Learning
            └── (CNNs, RNNs, Transformers, Diffusion Models, ...)

Traditional machine learning often requires a human expert to hand-engineer features, for example by writing code to extract edges from an image before feeding it to a classifier. Deep learning models learn these features automatically from raw data, layer by layer. This is why they excel at images, audio, and text, where hand-crafting features is impractical.

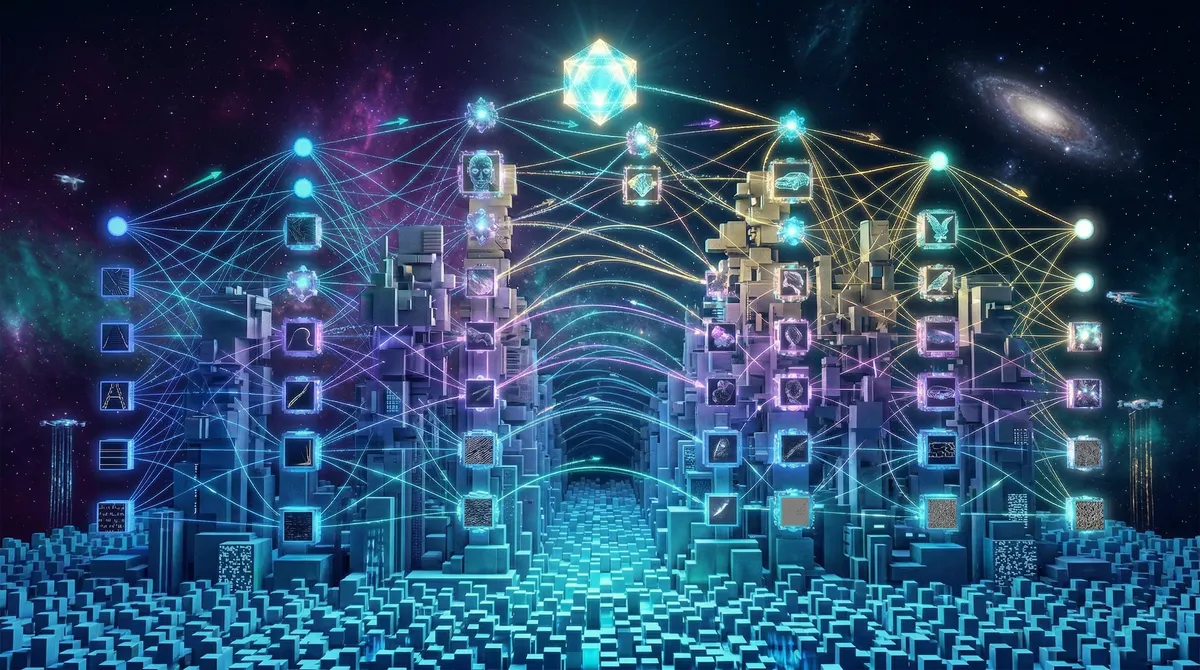

How a Neural Network Is Structured

A neural network is a computational graph loosely inspired by the human brain. It is made up of interconnected nodes (neurons) arranged in layers that progressively transform input data into a final prediction or output.

Every network has three main layer types:

- Input Layer — Receives raw data (pixel values, word embeddings, sensor readings, etc.)

- Hidden Layers — One or more intermediate layers where features are progressively abstracted

- Output Layer — Produces the final result (a class label, a probability, a generated token, etc.)

What makes a network “deep” is the number of hidden layers stacked between input and output. A network with two or three hidden layers is comparatively shallow; a large transformer can stack 96 or more. Each additional layer allows the model to represent increasingly abstract concepts: early layers in an image model learn edges and textures, middle layers learn shapes and object parts, and later layers learn to distinguish a cat from a dog.

Neurons and Weights

Each neuron does something simple: it takes its inputs, multiplies each one by a learned weight, sums them up, adds a bias, and passes the result through an activation function. Mathematically:

    output = activation(w₁x₁ + w₂x₂ + ... + wₙxₙ + b)

The weights and biases are the parameters the network learns during training. A typical modern model has millions, and sometimes hundreds of billions, of these parameters.
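
As a minimal sketch of the formula above, here is a single neuron in plain Python. The inputs, weights, and bias are made-up numbers chosen for illustration, and ReLU is just one possible choice of activation.

```python
def relu(z):
    """ReLU activation: pass positive values through, clamp negatives to zero."""
    return max(0.0, z)


def neuron(inputs, weights, bias, activation=relu):
    """Compute activation(w1*x1 + ... + wn*xn + b) for a single neuron."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(weighted_sum)


# Illustrative values only: three inputs, three weights, one bias.
x = [1.0, 2.0, 3.0]
w = [0.5, -1.0, 0.25]
b = 1.0

output = neuron(x, w, b)  # 0.5 - 2.0 + 0.75 + 1.0 = 0.25, and ReLU leaves it unchanged
print(output)
```

A full layer is many such neurons sharing the same inputs, each with its own weights and bias.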

Activation Functions

An activation function introduces non-linearity. Without it, stacking layers would be mathematically equivalent to having just one layer — no depth advantage at all. The most commonly used activations in 2026 are:

| Activation | Formula | Where Used |
|---|---|---|
| ReLU | max(0, x) | Convolutional networks, dense layers |
| GELU | x · Φ(x) | Transformers (GPT, BERT) |
| Sigmoid | 1 / (1 + e⁻ˣ) | Binary output layers |
| Softmax | exp(xᵢ) / Σⱼ exp(xⱼ) | Multi-class output layers |
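
The four activations in the table are short enough to write out by hand. The sketch below uses only the Python standard library and is meant for reading, not speed; the GELU shown is the exact form based on the normal CDF, whereas some libraries default to an approximation.

```python
import math


def relu(x):
    return max(0.0, x)


def gelu(x):
    """Exact GELU: x * Phi(x), where Phi is the standard normal CDF."""
    return x * 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def softmax(xs):
    """Softmax over a list; subtracting the max avoids overflow in exp()."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([2.0, 1.0, 0.1])  # arbitrary example scores
print(relu(-3.0), sigmoid(0.0), gelu(1.0), probs)
```

Note that softmax works on a whole vector of scores and returns probabilities that sum to 1, while the other three are applied to each value independently.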

How a Network Learns: Training and Backpropagation

Understanding training is the single biggest conceptual leap in deep learning. It transforms the question from “how is a network structured?” to “how does it get good at a task?”

Training is an iterative optimization loop. At each step, the model makes a prediction, a loss function measures how wrong it was, an algorithm called backpropagation calculates how much each weight contributed to that error, and an optimizer updates the weights to reduce it.

The Forward Pass

Data flows from the input layer through every hidden layer, with each neuron applying its weights and activation function, until it reaches the output layer and produces a prediction.
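
A forward pass can be sketched without any framework. The network below is hypothetical (4 inputs, 8 hidden units, 3 outputs) and its weights are random, so the scores it prints are meaningless; the point is only to show data moving layer by layer.

```python
import random


def relu(z):
    return max(0.0, z)


def dense(inputs, weights, biases, activation=None):
    """One fully connected layer: each row of `weights` feeds one neuron."""
    outputs = []
    for row, b in zip(weights, biases):
        z = sum(w * x for w, x in zip(row, inputs)) + b
        outputs.append(activation(z) if activation else z)
    return outputs


def init_layer(n_in, n_out, rng):
    """Small random weights and zero biases (illustrative initialization only)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


rng = random.Random(0)
layer_sizes = [4, 8, 3]  # hypothetical sizes: input, hidden, output
layers = [init_layer(a, b, rng) for a, b in zip(layer_sizes, layer_sizes[1:])]


def forward(x):
    """Push x through every layer: ReLU on hidden layers, raw scores at the output."""
    for i, (weights, biases) in enumerate(layers):
        is_last = i == len(layers) - 1
        x = dense(x, weights, biases, activation=None if is_last else relu)
    return x


scores = forward([0.2, -0.1, 0.7, 1.5])
n_params = sum(len(w) * len(w[0]) + len(b) for w, b in layers)
print(len(scores), n_params)
```

Counting parameters the same way for any layer (inputs × outputs, plus one bias per output) shows how quickly the totals grow as layers get wider.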

The Loss Function

The loss function quantifies the gap between the prediction and the ground truth. For classification tasks, cross-entropy loss is standard. For regression, mean squared error is common. The goal of training is to minimize this number.
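
Both losses named above fit in a few lines. This is a sketch for a single example (cross-entropy) and a small batch (mean squared error) with made-up numbers; the cross-entropy version takes raw scores (logits), which is also what PyTorch's `nn.CrossEntropyLoss` expects.

```python
import math


def mean_squared_error(predictions, targets):
    """Average of squared differences; the usual regression loss."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


def cross_entropy(logits, target_index):
    """Cross-entropy for one example, computed from raw scores (logits).

    Equivalent to -log(softmax(logits)[target_index]), using the
    log-sum-exp trick for numerical stability.
    """
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target_index]


mse = mean_squared_error([2.5, 0.0, 2.0], [3.0, -0.5, 2.0])
confident_right = cross_entropy([5.0, 0.0, 0.0], target_index=0)
confident_wrong = cross_entropy([5.0, 0.0, 0.0], target_index=1)
print(mse, confident_right, confident_wrong)
```

The same logits give a small loss when the high score lands on the correct class and a large one when it does not, which is exactly the signal training needs.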

Backpropagation and Gradients

Backpropagation uses the chain rule of calculus to calculate how much each weight contributed to the overall error. This gives us a gradient — a direction and magnitude telling us how to nudge each weight to reduce the loss.
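
To see the chain rule at work, the sketch below differentiates a one-input sigmoid neuron with a squared-error loss by hand, then checks the result against a numerical estimate. The neuron, the loss, and all values are illustrative assumptions; real frameworks do this automatically for every weight.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def loss(w, b, x, y):
    """Squared error of a one-input sigmoid neuron."""
    return (sigmoid(w * x + b) - y) ** 2


def gradients(w, b, x, y):
    """Chain rule: dL/dw = dL/da * da/dz * dz/dw (and likewise for b)."""
    z = w * x + b
    a = sigmoid(z)
    dL_da = 2.0 * (a - y)   # derivative of (a - y)^2 with respect to a
    da_dz = a * (1.0 - a)   # derivative of sigmoid
    dz_dw, dz_db = x, 1.0   # derivatives of w*x + b
    return dL_da * da_dz * dz_dw, dL_da * da_dz * dz_db


def numerical_grad_w(w, b, x, y, eps=1e-6):
    """Central finite difference, used only to sanity-check the analytic gradient."""
    return (loss(w + eps, b, x, y) - loss(w - eps, b, x, y)) / (2 * eps)


w, b, x, y = 0.8, -0.2, 1.5, 1.0  # illustrative values
grad_w, grad_b = gradients(w, b, x, y)
grad_w_check = numerical_grad_w(w, b, x, y)
print(grad_w, grad_w_check)
```

The gradient for `w` is negative here because the prediction is below the target, so increasing `w` would reduce the loss.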

The Optimizer

The optimizer applies those gradients to actually update the weights. The classic algorithm is Stochastic Gradient Descent (SGD), but in practice most modern work uses Adam or AdamW, which adapt the step size per parameter and typically converge faster with less tuning.
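
The update rules themselves are short. The sketch below applies plain gradient descent and an AdamW-style update to a toy one-parameter loss, f(w) = (w - 3)^2. The toy loss, learning rates, and step counts are assumptions picked for illustration; library implementations handle many parameters at once and include further options.

```python
import math


def grad(w):
    """Gradient of the toy loss f(w) = (w - 3)^2, whose minimum is at w = 3."""
    return 2.0 * (w - 3.0)


def sgd(w, lr=0.1, steps=100):
    """Plain gradient descent: step against the gradient with a fixed rate."""
    for _ in range(steps):
        w -= lr * grad(w)
    return w


def adamw(w, lr=0.1, steps=500, beta1=0.9, beta2=0.999, eps=1e-8, weight_decay=0.0):
    """Adam with decoupled weight decay; the step is scaled by gradient history."""
    m = v = 0.0
    for t in range(1, steps + 1):
        g = grad(w)
        w -= lr * weight_decay * w           # decoupled weight decay
        m = beta1 * m + (1 - beta1) * g      # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g  # running mean of squared gradients
        m_hat = m / (1 - beta1 ** t)         # bias correction for early steps
        v_hat = v / (1 - beta2 ** t)
        w -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w


w_sgd = sgd(0.0)
w_adamw = adamw(0.0)
print(w_sgd, w_adamw)
```

On a problem this simple both methods reach the minimum; the per-parameter scaling in Adam-style optimizers matters more when different weights see gradients of very different sizes.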

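    # PyTorch: a minimal training loop (PyTorch 2.x)
    import torch
    import torch.nn as nn
    from torch.utils.data import DataLoader, TensorDataset

    # Placeholder data so the loop runs as written: 512 random "images" of
    # 784 features with random labels. Swap in a real dataset in practice.
    dataset = TensorDataset(torch.randn(512, 784), torch.randint(0, 10, (512,)))
    dataloader = DataLoader(dataset, batch_size=64, shuffle=True)

    model = nn.Sequential(
        nn.Linear(784, 256),
        nn.ReLU(),
        nn.Linear(256, 128),
        nn.ReLU(),
        nn.Linear(128, 10),  # 10 output classes
    )

    optimizer = torch.optim.AdamW(model.parameters(), lr=1e-3, weight_decay=1e-2)
    loss_fn = nn.CrossEntropyLoss()

    for batch_X, batch_y in dataloader:
        optimizer.zero_grad()                 # reset gradients
        predictions = model(batch_X)          # forward pass
        loss = loss_fn(predictions, batch_y)  # measure the error
        loss.backward()                       # backpropagation
        optimizer.step()                      # update weights

Major Deep Learning Architectures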

Over the past decade, the field has converged on a handful of dominant architectures, each suited to different data modalities.

Convolutional Neural Networks (CNNs)

- Designed for spatial data (images, video)

- Use sliding filter kernels to detect local patterns

- Translation-equivariant: the same filter detects a feature wherever it appears, and pooling adds approximate invariance to position

- Key models: ResNet, EfficientNet, YOLO

- Best for: image classification, object detection, medical imaging
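
The sliding-filter idea can be shown on a tiny image in plain Python. The kernel below is hand-picked to respond to a dark-to-bright vertical edge, whereas a trained network would learn its kernels; the image values are made up.

```python
def conv2d(image, kernel):
    """'Valid' 2D sliding-window filter (cross-correlation, as in most DL libraries)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh)
                for dj in range(kw)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# Hand-picked kernel that responds to a dark-to-bright vertical edge.
edge_kernel = [[-1, 1],
               [-1, 1]]

# 4x6 image with the edge between columns 1 and 2 ...
image = [[0, 0, 1, 1, 1, 1] for _ in range(4)]
# ... and the same image with the edge moved two pixels to the right.
shifted = [[0, 0, 0, 0, 1, 1] for _ in range(4)]

response = conv2d(image, edge_kernel)
shifted_response = conv2d(shifted, edge_kernel)
print(response[0])          # [0, 2, 0, 0, 0]
print(shifted_response[0])  # [0, 0, 0, 2, 0]
```

The same four weights find the edge in both images, and the peak in the output moves with it. That weight sharing is why convolutional layers need far fewer parameters than dense layers on images.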

Transformers

- Designed for sequential data (text, audio, code)

- Use self-attention to model long-range dependencies

- Parallelizable — dramatically faster to train than RNNs

- Key models: GPT, BERT, T5, Vision Transformer (ViT)

- Best for: NLP, code generation, multimodal tasks
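
Self-attention reduces to a weighted average in which every token decides how much to look at every other token. The sketch below implements scaled dot-product attention for three toy token vectors. As a simplifying assumption it uses the tokens directly as queries, keys, and values, whereas a real transformer first projects them through learned matrices and runs several attention heads in parallel.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V, one row per token."""
    d = len(keys[0])
    n_out = len(values[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this token's query to every token's key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        out = [sum(w * v[c] for w, v in zip(weights, values)) for c in range(n_out)]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy token vectors (illustrative values).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)
print(weights[0])
```

Each query's scores are computed independently of the others, so all tokens can be processed at once. That is the source of the parallelism advantage over RNNs.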

RNNs and LSTMs were the workhorses of sequential data before transformers, and still appear in time-series forecasting and edge deployments where low memory is critical. Diffusion models, the backbone of Stable Diffusion and similar image generators, learn to iteratively denoise random noise into coherent data, and have become a dominant generative paradigm in 2025–2026.

Start with a small feedforward network (also called an MLP) to solidify training fundamentals, then move to CNNs for computer vision or Transformers for text. Both have rich pre-trained model ecosystems on Hugging Face and PyTorch Hub, so you can fine-tune them for your own task without training from scratch.

Deep Learning in the Real World

The practical footprint of deep learning is enormous. Here is a non-exhaustive snapshot of domains where it is actively deployed today:

- Healthcare — MRI and CT scan analysis, cancer detection, genomic analysis, drug discovery

- Autonomous Vehicles — Object detection and path planning in real time (e.g., YOLO-based perception stacks)

- Finance — Fraud detection, algorithmic trading, credit risk modeling

- NLP — Machine translation, chatbots, code generation, document summarization

- Entertainment — Content recommendation engines, voice cloning, video synthesis

Building Your First Model: A Practical Walkthrough

Let’s walk through a practical deep learning workflow from raw data to a working model, using the MNIST handwritten digit dataset as an example.

    # A complete, runnable MNIST example (PyTorch 2.x)
    import torch
    import torch.nn as nn
    from torchvision import datasets, transforms
    from torch.utils.data import DataLoader

    # 1. Data
    transform = transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.1307,), (0.3081,)),  # MNIST training-set mean and std
    ])
    train_data = datasets.MNIST('.', train=True, download=True, transform=transform)
    # The official MNIST test split serves as the validation set here.
    val_data = datasets.MNIST('.', train=False, download=True, transform=transform)
    train_loader = DataLoader(train_data, batch_size=64, shuffle=True)
    val_loader = DataLoader(val_data, batch_size=64)

    # 2. Model
    model = nn.Sequential(
        nn.Flatten(),
        nn.Linear(784, 256), nn.ReLU(), nn.Dropout(0.2),
        nn.Linear(256, 128), nn.ReLU(), nn.Dropout(0.2),
        nn.Linear(128, 10),
    )

    # 3. Training setup
    optimizer = torch.optim.AdamW(model.parameters(), lr=1e-3)
    loss_fn = nn.CrossEntropyLoss()
    scheduler = torch.optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max=10)

    # 4. Train loop
    for epoch in range(10):
        model.train()  # enable dropout
        for X, y in train_loader:
            optimizer.zero_grad()
            loss = loss_fn(model(X), y)
            loss.backward()
            optimizer.step()
        scheduler.step()  # one scheduler step per epoch, matching T_max=10

        # Validation
        model.eval()  # disable dropout
        correct = 0
        with torch.no_grad():
            for X, y in val_loader:
                correct += (model(X).argmax(1) == y).sum().item()
        print(f"Epoch {epoch+1}: Val Accuracy = {correct / len(val_data) * 100:.2f}%")

    # Expected after 10 epochs: roughly 98% validation accuracy (varies from run to run)

Common Pitfalls and Troubleshooting

Even experienced practitioners run into these problems regularly. Knowing them in advance will save you days of debugging.

Overfitting happens when your model memorizes the training data instead of learning to generalize. You'll see training accuracy keep climbing while validation accuracy plateaus or falls. Fix it by adding Dropout layers, applying weight decay (a close relative of L2 regularization, which AdamW applies in decoupled form), using data augmentation, or reducing model capacity. Early stopping, which halts training when validation loss stops improving, is also highly effective.
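
Early stopping is simple enough to sketch as a standalone helper. The function name, the `patience` and `min_delta` parameters, and the loss history below are all hypothetical; in a real loop you would run this check once per epoch and usually restore the best checkpoint afterwards.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return the index of the epoch at which training would stop.

    Training stops once the validation loss has failed to improve on its
    best value by more than `min_delta` for `patience` consecutive epochs.
    """
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(val_losses) - 1  # never triggered: ran to the end


# Made-up validation losses: improving at first, then drifting up (overfitting).
history = [0.90, 0.62, 0.48, 0.45, 0.46, 0.47, 0.49, 0.52]
stop_at = early_stopping_epoch(history, patience=3)
print(stop_at)  # 6: three epochs in a row without beating 0.45
```

A larger `patience` tolerates noisy validation curves at the cost of a few wasted epochs.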

Vanishing gradients occur when gradients shrink exponentially as they are backpropagated through many layers and approach zero, so the early layers stop learning. This is why deep networks with sigmoid or tanh activations often fail to train. The usual remedies: use ReLU or GELU activations (their gradients do not saturate for positive inputs), apply Batch Normalization or Layer Normalization between layers, add residual connections (as in ResNet) that give gradients a shortcut past layers, and initialize weights with He initialization for ReLU layers.
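
A rough back-of-the-envelope calculation shows how quickly the signal can shrink. The sketch below multiplies the activation derivatives along one path through a 20-layer network, assuming every layer sees the same positive pre-activation and ignoring the weight matrices, so it is a simplified picture, not a full backward pass.

```python
import math


def sigmoid_grad(z):
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)  # never larger than 0.25


def relu_grad(z):
    return 1.0 if z > 0 else 0.0


def gradient_scale(activation_grad, pre_activations):
    """Product of per-layer activation derivatives along one path through the network."""
    scale = 1.0
    for z in pre_activations:
        scale *= activation_grad(z)
    return scale


depth = 20
zs = [0.5] * depth  # assumption: the same positive pre-activation at every layer

sigmoid_scale = gradient_scale(sigmoid_grad, zs)
relu_scale = gradient_scale(relu_grad, zs)
print(sigmoid_scale, relu_scale)
```

With sigmoid, each layer multiplies the signal by at most 0.25, so twenty layers leave almost nothing. With ReLU the factor is 1 for active units, though units with negative inputs pass no gradient at all.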

Data leakage occurs when information from your test set contaminates your training process. A common example is normalizing the entire dataset before splitting it, so test-set statistics influence the normalization applied during training. The result is inflated evaluation metrics that don't reflect real-world performance. Always split your data before any preprocessing, fit preprocessing steps (scalers, tokenizers, encoders) on the training data only, and then apply them unchanged to the validation and test sets.
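
The split-then-fit order looks like this in practice. The example standardizes a single synthetic feature column using only the standard library; the data, the 80/20 split, and the helper name are assumptions for illustration.

```python
import random
import statistics

rng = random.Random(42)
values = [rng.gauss(50.0, 10.0) for _ in range(1000)]  # synthetic feature column

# 1. Split first.
rng.shuffle(values)
train, test = values[:800], values[800:]

# 2. Fit the scaler on the training split only.
train_mean = statistics.fmean(train)
train_std = statistics.pstdev(train)


def standardize(xs, mean, std):
    return [(x - mean) / std for x in xs]


# 3. Apply the same training statistics to both splits.
train_scaled = standardize(train, train_mean, train_std)
test_scaled = standardize(test, train_mean, train_std)

train_scaled_mean = statistics.fmean(train_scaled)
train_scaled_std = statistics.pstdev(train_scaled)
test_scaled_mean = statistics.fmean(test_scaled)
print(train_scaled_mean, train_scaled_std, test_scaled_mean)
```

The scaled test set will not have a mean of exactly zero, and that is expected: its statistics were never seen during fitting, which is the whole point.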

A poorly chosen learning rate is another common culprit. A rate that is too high causes training loss to oscillate wildly or diverge; one that is too low makes training converge very slowly or stall on a plateau or in a poor local minimum. A practical starting point with AdamW is 1e-3 for new models and somewhere between 2e-5 and 1e-4 for fine-tuning a pre-trained model. For anything beyond a quick experiment, a learning rate scheduler usually works better than a fixed rate for the full training run.
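
The three regimes are easy to reproduce on a toy problem. The sketch below runs plain gradient descent on f(w) = w^2 with three learning rates; the specific rates only make sense for this toy loss and are not recommendations for real models.

```python
def run_gradient_descent(lr, steps=50, w=1.0):
    """Minimize f(w) = w^2 (gradient 2w) and return the final loss."""
    for _ in range(steps):
        w -= lr * 2.0 * w
    return w * w


too_high = run_gradient_descent(lr=1.1)    # every step overshoots and the loss grows
reasonable = run_gradient_descent(lr=0.1)  # steady convergence toward zero
too_low = run_gradient_descent(lr=1e-4)    # barely moves in 50 steps

print(too_high, reasonable, too_low)
```

Plotting the training loss for the first few hundred steps of a real run gives the same kind of signal: a curve that explodes, one that falls smoothly, or one that hardly moves.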

The Modern Deep Learning Stack (2026)

Understanding the tooling ecosystem is just as important as understanding the theory:

- PyTorch 2.x — The dominant research and production framework. Dynamic computation graphs make debugging intuitive, and torch.compile() can bring significant speedups.

- TensorFlow / Keras — Strong enterprise adoption, excellent deployment tooling (TF Serving, TF Lite for mobile).

- JAX — Increasingly popular for large-scale pre-training and scientific computing; favored by Google DeepMind.

- Hugging Face Transformers — The go-to hub for pre-trained models across NLP, vision, and audio.

- Weights & Biases / MLflow — Experiment tracking, crucial for managing hyperparameter sweeps.

Deep Learning vs. Traditional Machine Learning

If you’re wondering when to reach for deep learning versus a classical approach like gradient boosting or random forests, here is the practical breakdown:

| Factor | Traditional ML | Deep Learning |
|---|---|---|
| Data size | Works well with thousands of examples | Needs tens of thousands to millions |
| Feature engineering | Often required | Learned automatically |
| Interpretability | Generally higher (decision trees, coefficients) | Generally lower (“black box”) |
| Training time | Minutes to hours on a CPU | Hours to weeks on GPUs |
| Unstructured data (images, audio, text) | Poor | Excellent |
| Tabular structured data | Often competitive or better | Often overkill |

For tabular business data, a well-tuned XGBoost model will frequently match or beat a neural network with far less complexity. Deep learning shines when the input is raw, high-dimensional, and unstructured.

Conclusion

Deep learning is the foundational technology underneath nearly every major AI product in 2026. Understanding it — not just at the API level but in terms of how training works, how architectures are motivated, and where the failure modes lie — is a career-defining skill for any developer working in or adjacent to AI.

The mental model to take away is this: a deep learning model is a very large, differentiable function. Training is the process of iteratively nudging its millions of parameters until the function maps inputs to the outputs you care about. Everything else — CNNs, attention mechanisms, diffusion processes — is a clever way of structuring that function for a particular type of data.

Ready to go deeper? Here is a practical progression: (1) Run the MNIST example in this article on your own machine. (2) Replace the MLP with a CNN using nn.Conv2d layers and observe the accuracy jump. (3) Try transfer learning — load a pre-trained ResNet-18 from PyTorch Hub and fine-tune it on your own image dataset. (4) Explore Hugging Face to fine-tune a small transformer like DistilBERT on a text classification task. (5) For structured learning, fast.ai's Practical Deep Learning course and Andrej Karpathy's YouTube lectures are the highest-signal free resources available today.

References:

- Precedence Research — Deep Learning Market Size, Share, and Trends 2026 to 2035 — https://www.precedenceresearch.com/deep-learning-market — Market sizing and CAGR data for 2026.

- Mordor Intelligence — Deep Learning Market Size to Surpass $296B by 2031 — https://www.globenewswire.com/news-release/2026/02/23/3242764 — Enterprise adoption statistics and growth forecasts.

- IBM Think — The 2026 Guide to Machine Learning — https://www.ibm.com/think/machine-learning — Foundational ML and deep learning concepts.

- DataCamp — How to Learn Deep Learning (2026 Guide) — https://www.datacamp.com/blog/how-to-learn-deep-learning — Architecture overview and learning pathway.

- GeeksforGeeks — Vanishing and Exploding Gradients Problems in Deep Learning — https://www.geeksforgeeks.org/deep-learning/vanishing-and-exploding-gradients-problems-in-deep-learning/ — Pitfall documentation and solution strategies.

- 365 Data Science — What Is Deep Learning? A Complete Beginner’s Guide — https://365datascience.com/trending/what-is-deep-learning/ — Neural network component explanations.

- IBM Think — What is a Transformer Model? — https://www.ibm.com/think/topics/transformer-model — Transformer architecture reference.